Crawl-Budget Optimization: Prioritize Pages to Maximize Discovery

Why crawl budget matters

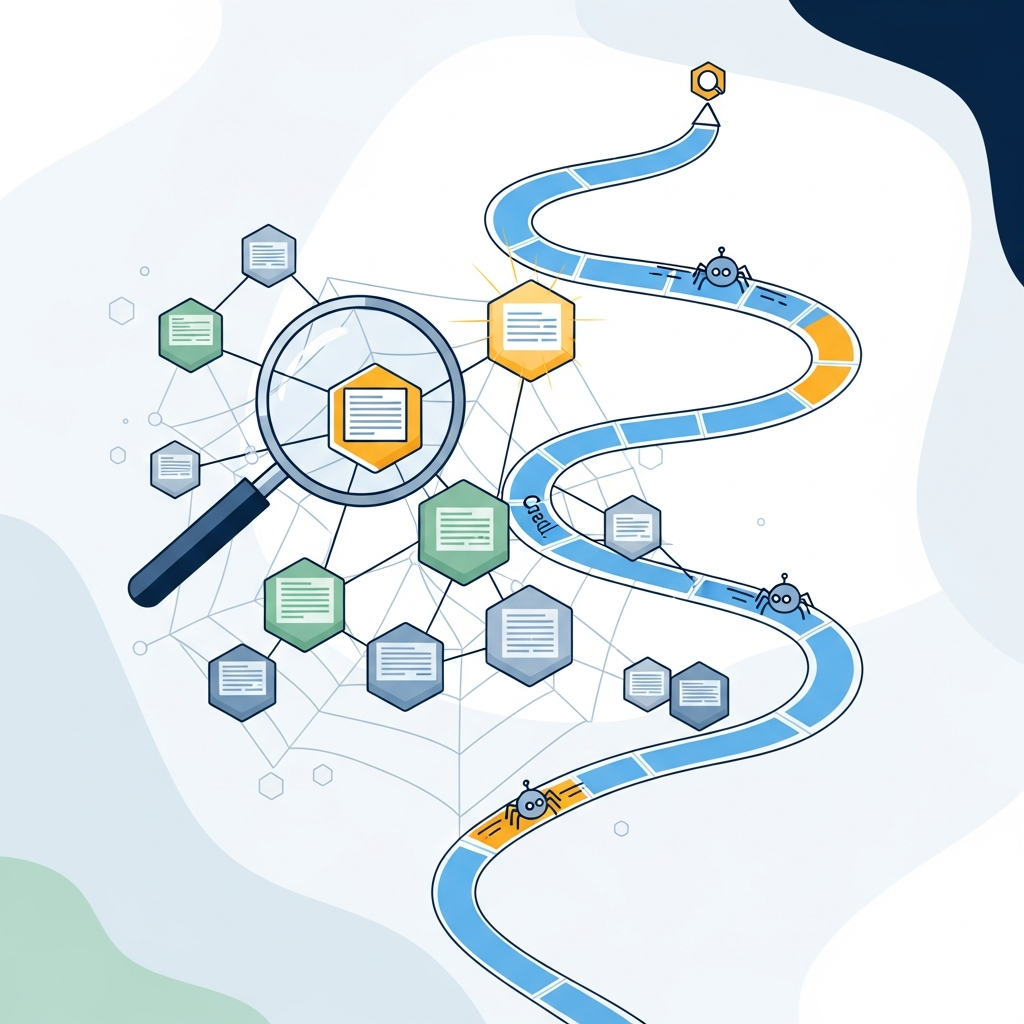

Search engines allocate a finite amount of crawler attention to each site. This budget governs how many pages can be crawled and re-crawled within a given timeframe. On large sites, such as catalogs with thousands of product pages or publishers with vast archives, a poorly managed crawl budget can mean important pages wait longer to be discovered and indexed while low-value pages monopolize crawl cycles.

Effective crawl-budget optimization focuses on ensuring crawlers spend their cycles on pages that contribute to your goals: high-value content, product pages with conversion potential, or category hubs that unlock deeper sections of your site. By directing crawl attention where it matters, you accelerate indexation for priority pages and reduce wasted resources on assets that don’t move the needle.

Think of crawl budget as a resource that you can steward. When you optimize for discovery rather than simply adding more pages, you create a scalable foundation for growth that compounds over time.

How crawlers allocate budget

Crawlers decide what to fetch based on signals that indicate potential value. The process blends site structure, internal links, sitemaps, canonicalization, and freshness. Important pages tend to attract more internal links and sit higher in the navigation, which in turn makes them easier to discover and re-crawl.

Several practical patterns influence allocation:

- Internal linking structure: Deeply nested pages receive fewer internal cues than hub pages with many links.

- Content freshness: Recently updated or newly published pages may be crawled sooner so the index reflects fresh information.

- Canonical and duplicate content: Non-canonical versions can siphon crawl budget away from the canonical pages.

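- Robots meta and robots.txt: A robots.txt disallow rule stops compliant crawlers from fetching a URL, which prevents budget waste on unneeded pages. A meta robots noindex tag keeps a page out of the index, but the page still has to be crawled for the tag to be seen, so it saves less budget.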

Understanding these signals helps you design crawl patterns that scale with site size while preserving indexation for the assets that matter most to users and business outcomes.

Sitemap optimization for discovery

A well-structured sitemap acts as a roadmap for crawlers. It doesn’t replace internal linking, but it can dramatically improve the probability that priority pages are noticed, especially after large site changes or migrations.

Best practices include:

- Segment large sites into multiple sitemaps (e.g., /sitemap-products.xml, /sitemap-articles.xml) and submit a sitemap index.

- Include only high-value, indexable pages in main sitemaps. Leave less critical assets to be discovered through internal links, and keep any noindexed URLs out of sitemaps.

- Keep lastmod accurate to signal freshness and avoid stale entries.

- Ensure canonical URLs in sitemaps match the preferred versions on the site.

- Regularly audit sitemap coverage and remove dead or redirected pages.
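
The segmentation and lastmod practices above can be sketched in a few lines of Python. This is a minimal illustration, not a production generator: the `example.com` URLs, the dates, and the helper names `build_urlset` and `build_index` are assumptions made for the example. The XML element names and namespace follow the sitemaps.org protocol.

```python
from datetime import date
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(entries):
    """Build one segment sitemap from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod should reflect a real content change, not the build time
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    return ET.tostring(urlset, encoding="unicode")


def build_index(sitemap_urls):
    """Build a sitemap index that points at each segment sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_urls:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = loc
    return ET.tostring(index, encoding="unicode")


# Hypothetical data: one product page and two segment files.
products_xml = build_urlset([("https://example.com/p/widget", date(2024, 5, 1))])
index_xml = build_index([
    "https://example.com/sitemap-products.xml",
    "https://example.com/sitemap-articles.xml",
])
```

Keeping one generator per content type makes it easier to see which segment has coverage problems when you review sitemap reports.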

In practice, combine sitemap optimization with a robust internal linking plan. Internal links guide crawlers to the pages that are also highlighted in the sitemap, creating a reinforcing signal pattern that accelerates discovery.

For a deeper dive into editorial workflows that support scalable sitemap-aware publishing, consider exploring resources like Editorial workflow for agencies and our platform overview at Asimpletool.

Prioritizing pages for crawling: signals and tactics

Prioritization is about ranking pages by expected business impact and crawl-worthiness. Use a practical framework to categorize pages into tiers, then align crawling and indexing actions with those tiers.

Tiering and signals

- Tier 1: Core business assets with high conversion or traffic potential (homepage, category hubs, best-selling products, cornerstone articles).

- Tier 2: Supporting pages that drive long-tail traffic or signpost to Tier 1 assets (how-to guides, FAQ pages, resource hubs).

- Tier 3: Low-value or ephemeral pages (thin content, outdated landing pages, certain tag pages) that can be deprioritized or noindexed if they don’t contribute to goals.
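
One way to make the tiers above operational is a simple rule-based classifier. The sketch below is only an illustration: the `PageStats` fields and every threshold are hypothetical and should be replaced with values that fit your own analytics.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Hypothetical per-page metrics pulled from your analytics."""
    url: str
    monthly_conversions: int
    monthly_organic_visits: int
    word_count: int


def assign_tier(page, conv_threshold=10, visit_threshold=500, thin_words=150):
    """Return 1, 2, or 3. All thresholds are illustrative assumptions."""
    if (page.monthly_conversions >= conv_threshold
            or page.monthly_organic_visits >= visit_threshold):
        return 1  # core business asset
    if page.word_count < thin_words and page.monthly_organic_visits == 0:
        return 3  # thin page with no organic traffic
    return 2  # supporting page


pages = [
    PageStats("/shoes/runner", 40, 2000, 600),
    PageStats("/guides/sizing", 1, 120, 900),
    PageStats("/tag/misc", 0, 0, 40),
]
tiers = {p.url: assign_tier(p) for p in pages}
```

Rules like these are a starting point for a manual review, since metrics alone can misjudge pages such as a new product with no traffic history yet.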

Operational steps

1) Audit your crawlable surface and map pages to business value.

2) Build a link graph that reinforces Tier 1 pages.

3) Update internal linking to ensure Tier 1 pages appear in top navigational paths.

4) Use robots.txt and meta robots to control access for Tier 3 pages.

5) Refresh or prune old assets to free crawl budget for priority content.

Where to begin: start with your top product pages and highest-value blog posts, then extend to category hubs and guides. This gradual expansion prevents crawl-budget fragmentation and creates a scalable path to full-site coverage.

As you implement, monitor indexation changes in Google Search Console (the Page indexing report, formerly called Coverage, along with the Crawl stats and Sitemaps reports) to verify that priority assets are indexed promptly.

Noindex low-value pages: when and how

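Noindex is a powerful tool for keeping pages that don’t serve user needs or business goals out of the index. It does not stop crawling by itself, because a crawler has to fetch the page to see the directive, although pages that stay noindexed tend to be crawled less often over time. Common candidates include duplicate variations, pagination duplicates, tag/category pages with thin content, and search filters that create countless permutations.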

Implementation tips:

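- Meta robots noindex on identified pages. For duplicate variations, a canonical link to the preferred version is often the better choice; combining noindex with a canonical that points to a different URL can send conflicting signals.

- Robots.txt disallow rules for high-volume, low-value pages that don’t need to be crawled at all. Don’t pair a disallow with noindex on the same URL: a blocked page is never fetched, so the crawler never sees the noindex tag.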

- Consider parameter handling in your CMS to avoid creating crawlable duplicates.

Test changes in a staging environment if possible and monitor the impact on crawl statistics and indexation pace. Be careful not to remove pages that users actively visit or that provide critical context to other pages.
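
Before deploying new disallow rules, it can help to check them against a list of URLs you care about. The sketch below uses Python's standard `urllib.robotparser` for that. The rules and URLs are made up for the example, and the helper name `blocked_urls` is an assumption. Note that this parser does plain prefix matching and does not implement the `*` and `$` wildcards that Google supports, so treat it as a rough check rather than an exact model of any one crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for internal search and cart pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given rules would block for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


candidates = [
    "https://example.com/products/shoes",
    "https://example.com/cart/checkout",
    "https://example.com/search?q=shoes",
]
blocked = blocked_urls(ROBOTS_TXT, candidates)
```

Running a check like this against your Tier 1 URL list is a cheap guard against accidentally blocking a priority page.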

Internal linking prioritization: shaping crawl paths

Internal links are the primary signal crawlers use to navigate a site. A well-designed internal linking strategy ensures crawlers reach priority assets quickly and efficiently while distributing equity to important pages.

Best-practice techniques include:

- Adopt a hub-and-spoke model where Tier 1 pages act as hubs with multiple internal links from lower-tier pages.

- Use descriptive anchor text that reflects user intent and helps search engines associate pages with the right topics.

- Limit excessive link depth by keeping most important pages within a few clicks from the homepage or main category pages.

- Regularly audit for broken links and orphaned pages to prevent crawlers from wasting time.

For large sites, consider a crawl-priority map that assigns a crawl weight to pages based on business value and link depth. This map can guide your sitemap updates, robots directives, and internal-link adjustments across teams.
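
A crawl-priority map can start from two inputs: click depth from the homepage and a business-value score per page. The sketch below computes depth with a breadth-first search over the internal-link graph and flags pages that are unreachable by links. The weight formula (value divided by one plus depth), the link graph, and the scores are all assumptions chosen for illustration, not an established standard.

```python
from collections import deque


def click_depths(links, start="/"):
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def crawl_weights(depths, business_value):
    """Illustrative weight: business value discounted by click depth."""
    return {
        url: value / (1 + depths[url])
        for url, value in business_value.items()
        if url in depths
    }


def orphans(depths, business_value):
    """Pages with a value score that no internal link path reaches."""
    return [url for url in business_value if url not in depths]


# Hypothetical link graph and value scores.
links = {"/": ["/shoes", "/blog"], "/shoes": ["/shoes/runner"], "/blog": []}
business_value = {"/shoes": 8, "/shoes/runner": 10, "/old-promo": 1}

depths = click_depths(links)
weights = crawl_weights(depths, business_value)
orphaned = orphans(depths, business_value)
```

A high-value page with a low weight is a candidate for more prominent internal links, and any orphaned page needs either a link or a decision to retire it.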

Internal linking is a practical lever you can pull today. If you want a real-world blueprint, we’ve published step-by-step workflows on our blog, including an example workflow at Editorial workflow for agencies.

Crawl signals and robustness: what helps crawlers discover essential pages

Crawlers rely on a mix of signals to identify value. Some signals are structural, like navigation and sitemaps; others are content-based, such as freshness and canonical integrity.

Key signals to optimize include:

- Clear navigation that surfaces Tier 1 pages in menus and breadcrumbs.

- Accurate canonical tags to prevent crawl budget fragmentation across duplicates.

- Structured data to help search engines understand page purpose and context.

- Accurate lastmod dates in sitemaps to indicate freshness.

- Robots directives that selectively exclude low-value content from crawling.

Combining these signals with a thoughtful internal linking strategy creates a robust crawl ecosystem where crawlers can efficiently discover the most important content and maintain up-to-date indexation.
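
Conflicts between these signals are easy to miss by eye, so a small audit script can help. The sketch below uses Python's standard `html.parser` to read the canonical link and meta robots tag from a page, then flags two common conflicts with the sitemap. The class and function names, the issue messages, and the sample HTML are assumptions for the example.

```python
from html.parser import HTMLParser


class SignalParser(HTMLParser):
    """Collect the canonical URL and meta robots content from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.robots = (attrs.get("content") or "").lower()


def audit_page(url, html, in_sitemap):
    """Return a list of signal conflicts found for one page."""
    parser = SignalParser()
    parser.feed(html)
    issues = []
    if in_sitemap and parser.canonical and parser.canonical != url:
        issues.append("sitemap lists a non-canonical URL")
    if in_sitemap and parser.robots and "noindex" in parser.robots:
        issues.append("sitemap lists a noindex page")
    return issues


sample_html = (
    '<html><head>'
    '<link rel="canonical" href="https://example.com/shoes">'
    '<meta name="robots" content="noindex, follow">'
    '</head></html>'
)
issues = audit_page("https://example.com/shoes?color=red", sample_html, in_sitemap=True)
```

The comparison here is an exact string match, so a real audit would likely normalize trailing slashes and URL case first.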

A practical 20-step workflow to optimize crawl budget

- Audit crawlable surface: map pages, crawl paths, and identified duplicates.

- Define business-value tiers (Tier 1, Tier 2, Tier 3) based on conversions and engagement.

- Audit internal links to visualize crawl pathways and identify bottlenecks.

- Evaluate existing sitemaps: ensure coverage aligns with Tier 1 and Tier 2 pages.

- Tag low-value pages for noindex or disallow in robots.txt where appropriate.

- Prune or merge thin content that adds crawl noise without value.

- Update canonical tags for any duplicated assets.

- Segment sitemaps to reflect site hierarchy and avoid overly large files.

- Implement cross-domain linking if relevant and track impact across properties.

- Clean up broken links and redirect chains that slow discovery.

- Improve homepage and category hubs to act as robust crawl anchors.

- Enhance page-level signals with updated metadata and structured data.

- Update Tier 1 assets that have gone stale so their content and lastmod dates signal freshness.

- Publish changes to staging for validation before production.

- Submit updated sitemaps to search engines and verify submission status.

- Monitor crawl stats in your analytics and search-console dashboards.

- Iterate with small, measurable changes and track indexation speed.

- Run a monthly crawl-budget health check to catch regressions.

- Document outcomes to inform cross-team planning.

- Lock in a repeatable quarterly crawl-optimization cadence.
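
The redirect-chain cleanup step in the list above can be partly automated once you have a redirect map exported from your server or CMS. The sketch below follows each redirect to its final destination and reports chains longer than one hop. The `redirects` mapping and the helper names are hypothetical.

```python
def redirect_chain(redirects, url, max_hops=10):
    """Follow redirects from url and return every URL visited, in order."""
    chain = [url]
    seen = {url}
    while url in redirects and len(chain) <= max_hops:
        url = redirects[url]
        chain.append(url)
        if url in seen:  # redirect loop: stop after recording the repeat
            break
        seen.add(url)
    return chain


def chains_to_flatten(redirects):
    """Return chains with more than one hop, keyed by the starting URL."""
    result = {}
    for start in redirects:
        chain = redirect_chain(redirects, start)
        if len(chain) > 2:
            result[start] = chain
    return result


# Hypothetical redirect map: /a -> /b -> /c is a two-hop chain.
redirects = {"/a": "/b", "/b": "/c", "/old": "/new"}
long_chains = chains_to_flatten(redirects)
```

Each chain found this way can be flattened by pointing the first URL directly at the last one.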

Tools, checklists, and common pitfalls

Key tooling categories:

- Site crawlers and log-file analyzers to see how search engines actually crawl your site.

- CMS plugins for sitemap generation, canonical management, and robots directives.

- Structured data validators to ensure markup is correct and eligible for rich results.

- Analytics dashboards that correlate crawl events with indexation and traffic trends.
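
As a rough illustration of log-file analysis, the sketch below counts requests whose user agent contains "Googlebot", grouped by top-level site section. It assumes access logs in the common combined format, and the sample lines are invented. User-agent strings can be spoofed, so a real analysis would also verify the requesting IP addresses.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent)
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits_by_section(lines):
    """Count Googlebot requests per top-level path segment."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        section = "/" + path.strip("/").split("/", 1)[0]
        hits[section] += 1
    return hits


BOT = 'Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)'
sample_lines = [
    f'66.249.66.1 - - [10/May/2024:06:25:01 +0000] "GET /products/runner HTTP/1.1" 200 5123 "-" "{BOT}"',
    f'66.249.66.1 - - [10/May/2024:06:25:02 +0000] "GET /search?q=shoes&page=9 HTTP/1.1" 200 812 "-" "{BOT}"',
    f'66.249.66.1 - - [10/May/2024:06:25:03 +0000] "GET /search?q=boots HTTP/1.1" 200 790 "-" "{BOT}"',
    '203.0.113.7 - - [10/May/2024:06:25:04 +0000] "GET /products/runner HTTP/1.1" 200 5123 "-" "Mozilla/5.0"',
]
hits = googlebot_hits_by_section(sample_lines)
```

If a low-value section such as internal search accounts for a large share of hits, that is a sign crawl budget is being spent in the wrong place.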

Common pitfalls to avoid:

- Over-optimizing internal links in a way that creates artificial hierarchies.

- Noindexing critical assets or misconfiguring robots.txt, which can trap value behind gates.

- Ignoring log-file data and relying solely on crawler reports.

- Neglecting to update sitemaps after site changes, resulting in stale discovery signals.

Measuring impact and ongoing optimization

Metrics to track include indexation rate, crawl coverage, time-to-index for priority pages, and changes in organic traffic for prioritized assets. Use a combination of Search Console signals, server logs, and internal analytics to triangulate results.

A practical approach:

- Baseline crawl stats: pages crawled per day, crawl errors, and average crawl depth.

- Indexation velocity: how quickly priority pages move from discovery to indexation.

- Coverage changes: number of valid indexed pages vs. exclusions after adjustments.

- Traffic attribution: organic visits to Tier 1 pages before and after changes.
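
Indexation velocity from the list above can be tracked with a small calculation once you record two dates per priority page. In the sketch below, the publication dates and first-indexed dates are assumed to come from your own tracking (for example, a spreadsheet updated from URL inspections); the data and function names are hypothetical.

```python
from datetime import date
from statistics import median


def days_to_index(published, first_indexed):
    """Days between publication and first observed indexation, per URL."""
    return {
        url: (first_indexed[url] - published[url]).days
        for url in published
        if url in first_indexed
    }


def summarize(published, first_indexed):
    """Share of pages indexed so far and the median lag in days."""
    lags = days_to_index(published, first_indexed)
    return {
        "indexed_share": len(lags) / len(published),
        "median_days_to_index": median(lags.values()) if lags else None,
    }


# Hypothetical tracking data for three Tier 1 pages.
published = {
    "/shoes/runner": date(2024, 5, 1),
    "/shoes/trail": date(2024, 5, 2),
    "/shoes/court": date(2024, 5, 3),
}
first_indexed = {
    "/shoes/runner": date(2024, 5, 3),
    "/shoes/trail": date(2024, 5, 8),
}
summary = summarize(published, first_indexed)
```

Comparing this summary before and after a change gives a simple view of whether priority pages are being indexed faster.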

Document what you learn and refine your crawl-budget map quarterly. If you want a guided walkthrough, explore practical workflows in our resources and consider a consultation for a tailored crawl-optimization plan.

Quick-start checklist

- Audit your crawlable surface and identify Tier 1 assets.

- Audit and optimize your internal linking structure to support Tier 1 pages.

- Update sitemaps to reflect the hierarchy and priorities.

- Flag low-value pages for noindex or robots.txt disallow as appropriate.

- Validate canonical tags to prevent duplicate crawling of similar pages.

- Implement freshness signals on priority pages (updates, new content).

- Monitor indexation and crawl metrics after changes and adjust as needed.

If you’re seeking a hands-off approach to crawl-budget optimization, explore how automation platforms can streamline the steps above, including CMS integrations and scalable sitemap management. For more context, see our broader guides and case studies on related topics at Asimpletool.