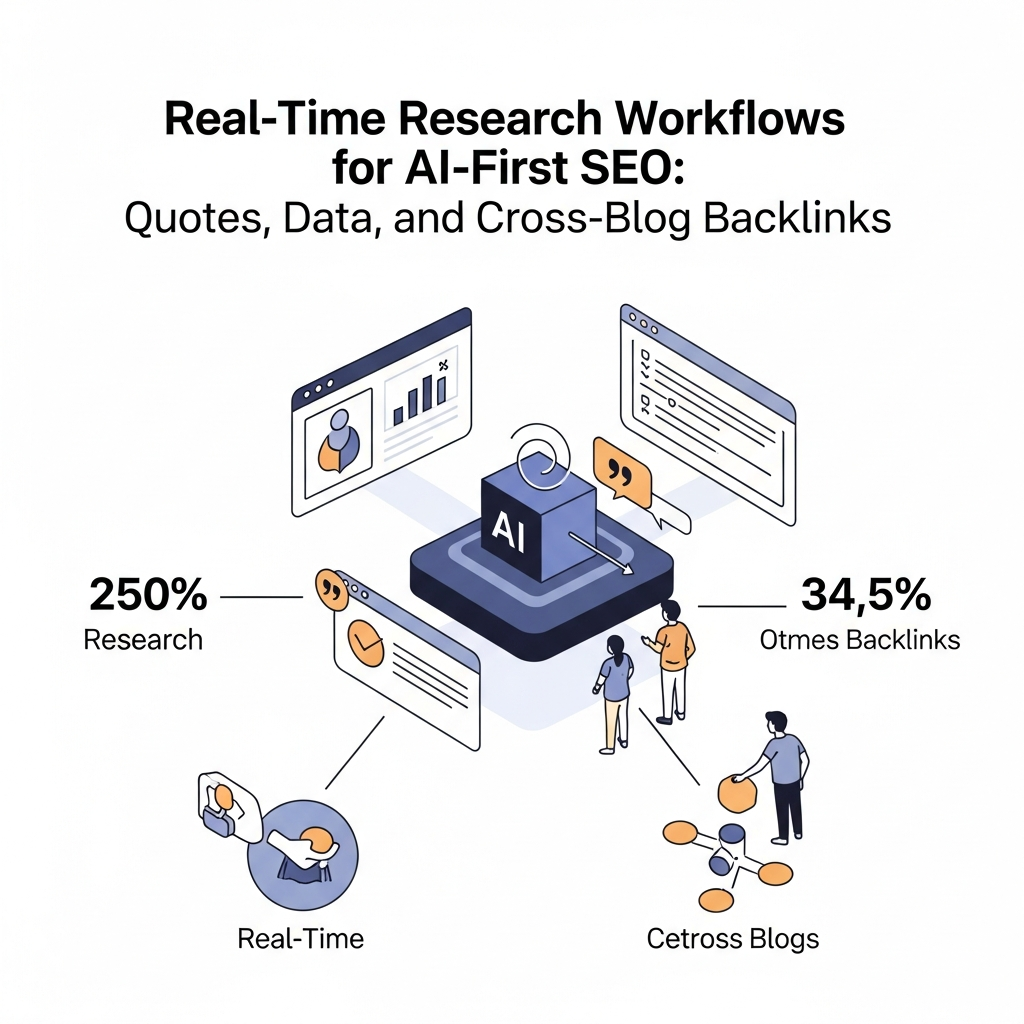

Real-Time Research Workflows for AI-First SEO: Quotes, Data, and Cross-Blog Backlinks

- What is Real-Time Research for AI-First SEO?

- Why It Matters for AI-First Search

- The Core Workflow: Data, Quotes, and Cross-Blog Backlinks

- Sourcing Data and Credible Quotes

- Building Networked Backlinks Across Blogs

- Schema Markup for LLM Optimization

- Real-Time Content Updates and Governance

- Data-Driven Content Briefs: Templates and Checklists

- Architecture Options: API Publishing and Cross-Platform Delivery

- Measurement, KPIs, and ROI

- Getting Started: A 30-Day Plan

What is Real-Time Research for AI-First SEO?

Real-time research is a disciplined approach to sourcing fresh data, quotes from credible experts, and timely insights that inform content. When applied to AI-first SEO, it becomes a practical framework for creating content that feels current to both human readers and AI systems. The goal is to pair evergreen topics with dynamic signals—statistics, benchmarks, and expert perspectives that shift as new information becomes available.

In an AI-first world, search systems value content that demonstrates thought leadership, up-to-date data, and transparent sourcing. Real-time research helps you assemble content briefs that reflect recent developments, which can support faster indexing, higher relevance, and stronger trust signals. This approach is not about chasing novelty for novelty’s sake; it’s about aligning data freshness with user intent and the evolving landscape of AI-assisted search.

Think of real-time research as a pipeline: source data, verify credibility, weave quotes, assemble cross-blog references, encode signals for LLMs, and continuously refresh outputs. The end result is a living piece of content that can be updated without compromising brand voice or governance.

Why It Matters for AI-First Search

AI-first search emphasizes how models interpret content and the signals they extract from it. Content built around real-time data tends to earn higher engagement, because readers sense relevance and authority. For marketers, this can translate into better click-through rates, longer time on page, and clearer topical signals for semantic understanding.

Real-time signals also help with cross-domain credibility. When articles reference current studies, dashboards, or expert quotes, search systems are more likely to treat the content as timely and trustworthy. This improves the potential for long-tail visibility and the likelihood of earning higher-quality backlinks from credible sources.

Beyond search rankings, real-time research supports governance at scale. It creates repeatable processes that ensure content remains aligned with brand standards while staying responsive to new data. For teams managing multiple blogs or platforms, this approach reduces manual toil and accelerates content velocity across WordPress, Webflow, Shopify, and beyond.

The Core Workflow: Data, Quotes, and Cross-Blog Backlinks

The core workflow blends three pillars: (1) data-driven content foundations, (2) credible quotes and statistics, and (3) cross-blog backlink opportunities. When orchestrated well, these elements reinforce each other and create a resilient SEO signal set that scales with your organization.

Step one is to design a data-first content brief that anchors topics to real-time signals. Step two is to source quotes and statistics from verifiable sources. Step three is to identify cross-blog backlink opportunities that are contextually relevant and editorially safe. Step four is to encode relevant signals for LLMs so future iterations can reuse the same structure without starting from scratch.

In practice, teams build reusable templates: a data source matrix, an attribution ledger, and a link-network map. Each template supports a repeatable process that can be executed by editors, researchers, and automation layers without sacrificing quality or governance.

Sourcing Data and Credible Quotes

Credible sourcing begins with a disciplined set of accepted sources, licenses, and attribution rules. A robust workflow collects data from peer-reviewed studies, official dashboards, government statistics, and industry benchmarks. Each data point should carry metadata: date, source, methodology, and a brief note on limitations. This metadata is essential for later updates and transparency.
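One way to make that metadata hard to skip is to store every figure as a small typed record. The sketch below is an illustration only: the `DataPoint` class, its field names, and the `missing_metadata` helper are assumptions made for this example, and the sample values are placeholders rather than real statistics.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DataPoint:
    """One sourced figure plus the metadata described above (hypothetical shape)."""
    claim: str          # the statement the figure supports
    value: float
    unit: str
    source: str         # where the figure came from
    published: date     # date of the source, not of the article
    methodology: str    # how the source produced the figure
    limitations: str    # brief note on known caveats


def missing_metadata(point: DataPoint) -> list[str]:
    """Return the names of required text fields that were left blank."""
    required = ("source", "methodology", "limitations")
    return [name for name in required if not getattr(point, name).strip()]


# Placeholder record for illustration; not a real statistic or source.
example = DataPoint(
    claim="Placeholder claim",
    value=42.0,
    unit="percent",
    source="Example Statistics Office dashboard",
    published=date(2024, 1, 15),
    methodology="",  # left blank on purpose to show the check
    limitations="Sample covers one region only",
)

print(missing_metadata(example))
```

A check like this can run before a brief is approved, so a figure without a stated methodology never reaches a draft.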

When incorporating quotes, aim for statements that illuminate, not merely decorate the article. Quotes should come with attributions, brief context, and a clear connection to the topic. For AI-first content, embedding quotes strategically—paired with the underlying data—helps readers and models understand the factual basis of claims.

Practical tip: maintain a living quote library. Tag quotes by topic, credibility tier, and usage rights. This allows you to fetch relevant quotes for new posts quickly while keeping sourcing consistent and auditable.
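A quote library along these lines could start as a plain in-memory structure before it moves into a database or CMS. This is a minimal sketch under stated assumptions: the tier scale (1 is strongest), the usage-rights labels, and the class names are all invented for the example, and the quotes are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Quote:
    text: str
    speaker: str
    topics: set[str]
    credibility_tier: int  # assumption: 1 = strongest, 3 = weakest
    usage_rights: str      # assumption: "cleared", "attribution-required", "unknown"


@dataclass
class QuoteLibrary:
    quotes: list[Quote] = field(default_factory=list)

    def add(self, quote: Quote) -> None:
        self.quotes.append(quote)

    def find(self, topic: str, max_tier: int = 2) -> list[Quote]:
        """Quotes on a topic that meet the tier limit and have usable rights."""
        usable = {"cleared", "attribution-required"}
        matches = [
            q for q in self.quotes
            if topic in q.topics
            and q.credibility_tier <= max_tier
            and q.usage_rights in usable
        ]
        # Strongest sources first.
        return sorted(matches, key=lambda q: q.credibility_tier)


# Placeholder entries for illustration only.
library = QuoteLibrary()
library.add(Quote("Placeholder quote A", "Expert A", {"schema"}, 2, "cleared"))
library.add(Quote("Placeholder quote B", "Expert B", {"schema", "governance"}, 1,
                  "attribution-required"))
library.add(Quote("Placeholder quote C", "Expert C", {"schema"}, 1, "unknown"))

for quote in library.find("schema"):
    print(quote.speaker, quote.credibility_tier, quote.usage_rights)
```

Filtering on usage rights inside `find` means a quote with unclear permissions never shows up as a candidate, which keeps the sourcing auditable by default.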

Building Networked Backlinks Across Blogs

Backlinks remain a fundamental signal in SEO, but the value lies in quality, relevance, and editorial context. A networked backlinks strategy involves coordinating references across a set of adjacent blogs or partner sites in a way that makes sense for readers and search engines. The goal is not mass link-building; it’s building a credible map of related content that each article can reference naturally.

Key practices include: (a) identifying thematically aligned blogs, (b) crafting content briefs that invite cross-references rather than forced mentions, and (c) coordinating anchor text that remains faithful to the topic and user intent. Maintain editorial control to prevent link schemes, ensure disclosures when needed, and preserve content integrity.

Operationally, create a backlink ledger: topics, target posts, proposed anchor text, publication date, and attribution notes. This ledger becomes a living document that guides outreach and cross-link integration while preserving governance standards.
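The ledger can be kept as structured records rather than a free-form spreadsheet, which makes simple editorial checks possible. The sketch below assumes a `LedgerEntry` shape based on the fields listed above, plus a `source_post` field that is an addition for this example. The `repeated_anchors` check and its limit of two are also assumptions; the idea is only that many identical anchors pointing at one target deserve an editor's look.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class LedgerEntry:
    topic: str
    source_post: str        # assumption: URL of the post that will carry the link
    target_post: str
    anchor_text: str
    publication_date: date
    attribution_notes: str = ""


def repeated_anchors(ledger: list[LedgerEntry], limit: int = 2) -> dict:
    """Map (target, anchor) pairs to their count when used more than `limit` times."""
    counts = Counter((e.target_post, e.anchor_text.lower()) for e in ledger)
    return {key: n for key, n in counts.items() if n > limit}


# Placeholder entries using example.com URLs.
target = "https://example.com/guide"
ledger = [
    LedgerEntry("research", "https://example.com/a", target,
                "real-time research", date(2024, 5, 1)),
    LedgerEntry("research", "https://example.com/b", target,
                "Real-Time Research", date(2024, 5, 8)),
    LedgerEntry("research", "https://example.com/c", target,
                "real-time research", date(2024, 5, 15)),
    LedgerEntry("schema", "https://example.com/d", target,
                "guide to sourcing", date(2024, 5, 22)),
]

print(repeated_anchors(ledger))
```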

Schema Markup for LLM Optimization

Schema markup helps search engines and language models understand structure, relationships, and intent. For AI-first SEO, markup supplies structured signals that LLMs can draw on when generating or updating content. Practical schema.org types include Article, Organization, Dataset, and Question, augmented with real-time data timestamps and source references.

Beyond standard schemas, consider lightweight, machine-readable annotations that flag data freshness, source credibility, and quote attribution. These annotations can be consumed by internal tooling or fed into your content management workflow to guide updates and keep the content aligned with evolving data.

Remember: schema is a signal, not a substitute for quality writing. Use it to augment clarity and traceability while preserving a natural, reader-first narrative.
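As a rough illustration of the Article and Dataset types mentioned above, the following sketch builds a JSON-LD object with timestamps and source references. The `article_jsonld` function and the shape of the `sources` list are assumptions for this example; the property names used (`headline`, `datePublished`, `dateModified`, `citation`, `name`, `url`) come from the schema.org vocabulary. All values are placeholders.

```python
import json


def article_jsonld(headline: str, published: str, modified: str,
                   sources: list[dict]) -> dict:
    """Build a schema.org Article object that cites its data sources."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,  # bump this whenever the data is refreshed
        "citation": [
            {
                "@type": "Dataset",
                "name": s["name"],
                "url": s["url"],
                "dateModified": s["updated"],
            }
            for s in sources
        ],
    }


# Placeholder values for illustration.
doc = article_jsonld(
    headline="Example headline",
    published="2024-01-15",
    modified="2024-04-10",
    sources=[{
        "name": "Example benchmark dataset",
        "url": "https://example.com/data",
        "updated": "2024-04-01",
    }],
)

# The result would typically go inside a <script type="application/ld+json"> tag.
print(json.dumps(doc, indent=2))
```

Generating the markup from the same records that hold the source metadata keeps the visible article and the structured data from drifting apart.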

Real-Time Content Updates and Governance

Real-time updates require a governance model that balances speed with brand consistency. Establish a cadence for updates (e.g., quarterly refreshes plus event-driven changes) and define who can approve updates and what triggers a revision. Clear ownership prevents drift and preserves editorial voice across multiple authors and platforms.

Automation can handle routine refreshes, such as updating statistics from a live dashboard or adding a newly quoted expert. However, human review remains essential for interpretive changes, re-framing, or when new data contradicts prior conclusions. Build guardrails that require human validation for any substantive claim or change in narrative direction.
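A guardrail of this kind can be expressed as a small routing function that decides whether a proposed change may publish automatically. Everything here is an assumption made for illustration: the change kinds, the `ProposedChange` shape, and the 10% threshold are not prescribed by any standard and would need tuning to your own editorial policy.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: only these change kinds are ever eligible for auto-publishing.
ROUTINE_KINDS = {"statistic_refresh", "quote_added"}


@dataclass
class ProposedChange:
    kind: str
    old_value: Optional[float] = None
    new_value: Optional[float] = None


def needs_human_review(change: ProposedChange,
                       max_relative_shift: float = 0.10) -> bool:
    """Return True when an editor must approve the change before publishing."""
    if change.kind not in ROUTINE_KINDS:
        return True  # re-framing, new claims, narrative changes
    if change.kind == "statistic_refresh":
        if change.old_value is None or change.new_value is None:
            return True  # cannot judge the size of the change
        if change.old_value == 0:
            return change.new_value != 0
        shift = abs(change.new_value - change.old_value) / abs(change.old_value)
        # A large swing may contradict the article's earlier conclusions.
        return shift > max_relative_shift
    return False


print(needs_human_review(ProposedChange("statistic_refresh", 100.0, 104.0)))
print(needs_human_review(ProposedChange("statistic_refresh", 100.0, 150.0)))
print(needs_human_review(ProposedChange("reframing")))
```

Defaulting to review for any unrecognized change kind is the conservative choice: automation has to opt in to publishing rather than editors having to opt out.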

Effective governance includes versioning, changelogs, and rollback capabilities. When a data point is updated, log the source, date, and rationale so readers can trace the evolution of a story over time.
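The versioning, changelog, and rollback ideas above can be sketched as an append-only history per data point. The `TrackedField` and `Revision` names are hypothetical, and the values are placeholders. Note one design choice in this sketch: a rollback is recorded as a new revision instead of deleting the bad one, so the audit trail stays complete.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Revision:
    value: str
    source: str
    changed_on: date
    rationale: str


@dataclass
class TrackedField:
    name: str
    history: list[Revision] = field(default_factory=list)

    @property
    def current(self) -> Revision:
        return self.history[-1]

    def update(self, value: str, source: str, changed_on: date,
               rationale: str) -> None:
        self.history.append(Revision(value, source, changed_on, rationale))

    def rollback(self, changed_on: date, reason: str) -> None:
        """Restore the previous value by appending it as a new revision."""
        if len(self.history) < 2:
            raise ValueError("no earlier revision to roll back to")
        previous = self.history[-2]
        self.history.append(
            Revision(previous.value, previous.source, changed_on,
                     f"rollback: {reason}")
        )

    def changelog(self) -> list[str]:
        return [
            f"{r.changed_on.isoformat()}: {r.value} ({r.source}) - {r.rationale}"
            for r in self.history
        ]


# Placeholder values for illustration.
stat = TrackedField("adoption_rate")
stat.update("41%", "Example dashboard", date(2024, 1, 10), "initial publication")
stat.update("47%", "Example dashboard", date(2024, 4, 10), "quarterly refresh")
stat.rollback(date(2024, 4, 12), "source issued a correction")

print("\n".join(stat.changelog()))
```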

Data-Driven Content Briefs: Templates and Checklists

A data-driven brief is the blueprint for AI-ready content. It combines topic scope, data sources, required quotes, target audience notes, and backlink opportunities. A strong brief reduces ambiguity, accelerates production, and supports consistent governance across writers and editors.

Core components include: (a) objective and user intent, (b) real-time data requirements with source metadata, (c) quotes and attribution plan, (d) cross-blog reference map, (e) LLM-friendly prompts and schema annotations, (f) update schedule and governance rules, and (g) acceptance criteria and QA steps.

Example checklists: source verification checklist, quote attribution checklist, backlink relevance checklist, update readiness checklist, and editorial tone consistency checklist. Use them as embedded prompts in your CMS or content automation layer so every article follows the same rigorous standard.
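Components (a) through (g) lend themselves to a simple completeness check that can run before a brief is handed to a writer. The section keys below are hypothetical names chosen for this sketch; a real brief template would likely have richer structure under each key.

```python
# Assumption: one key per core component (a)-(g) of the brief.
REQUIRED_SECTIONS = (
    "objective",              # (a) objective and user intent
    "data_requirements",      # (b) real-time data requirements
    "quote_plan",             # (c) quotes and attribution plan
    "cross_blog_references",  # (d) cross-blog reference map
    "schema_annotations",     # (e) prompts and schema annotations
    "update_schedule",        # (f) update schedule and governance rules
    "acceptance_criteria",    # (g) acceptance criteria and QA steps
)


def incomplete_sections(brief: dict) -> list[str]:
    """Return the required sections that are missing or empty."""
    return [name for name in REQUIRED_SECTIONS if not brief.get(name)]


# Placeholder brief for illustration.
draft_brief = {
    "objective": "Explain the topic for a practitioner audience",
    "data_requirements": ["Example benchmark dataset"],
    "quote_plan": [],  # empty on purpose to show the check
    "cross_blog_references": ["https://example.com/guide"],
    "update_schedule": "quarterly",
    "acceptance_criteria": ["all figures carry a source and date"],
}

print(incomplete_sections(draft_brief))
```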

Architecture Options: API Publishing and Cross-Platform Delivery

Real-time research workflows benefit from a modular architecture that supports API publishing and multi-platform delivery. A practical setup might include a research ingestion layer, a data verification module, a quotes and references engine, a backlink orchestration module, and an output layer that pushes content to WordPress, Webflow, or Shopify via API.

Option A is a centralized CMS-first approach: all data, quotes, and backlinks are prepared in a single system and published through API endpoints to each destination. Option B is a decoupled pipeline: a research microservice feeds content to independent CMS instances, allowing platform-specific optimization while preserving a common data model.
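Both options rest on the same idea: a common data model on one side and a thin adapter per destination on the other. The sketch below shows that shape with a hypothetical `Publisher` interface and an in-memory stand-in. It deliberately makes no real API calls; an actual WordPress, Webflow, or Shopify adapter would implement `publish` against that platform's own documented API.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Post:
    """Common data model shared by every destination (hypothetical shape)."""
    slug: str
    title: str
    body_html: str


class Publisher(Protocol):
    def publish(self, post: Post) -> str:
        """Push the post to one platform and return a reference to it."""
        ...


class InMemoryPublisher:
    """Stand-in for a real CMS adapter; stores posts instead of calling an API."""

    def __init__(self, platform: str) -> None:
        self.platform = platform
        self.published: dict[str, Post] = {}

    def publish(self, post: Post) -> str:
        self.published[post.slug] = post
        return f"{self.platform}:{post.slug}"


def publish_everywhere(post: Post, publishers: list[Publisher]) -> list[str]:
    """Send one post through every configured adapter."""
    return [publisher.publish(post) for publisher in publishers]


post = Post("pilot-post", "Pilot post", "<p>Placeholder body</p>")
adapters = [InMemoryPublisher("wordpress"), InMemoryPublisher("webflow")]

print(publish_everywhere(post, adapters))
```

Because the orchestration code depends only on the interface, a new destination can be added or swapped out without touching the research and verification layers.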

Whichever path you choose, enforce authentication, audit trails, and role-based access to protect data integrity. Include a content governance layer that enforces tone, style, and brand guidelines across all channels.

Measurement, KPIs, and ROI

Define success with a concise set of metrics that align with your goals: organic traffic growth, time-to-index, click-through rates on AI-first results, and backlink quality scores. Track data freshness and quote usage as indicators of content credibility. For AI-first content, monitoring model-assisted engagement and structured data signals can reveal how effectively the content supports AI-driven search and knowledge extraction.

Establish a simple dashboard that shows: data freshness score, quote attribution accuracy, backlink network health, and schema completeness. Use this dashboard to guide quarterly refreshes and to justify investments in data pipelines and governance tools.
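There is no standard definition of a data freshness score, so any formula is a local convention. As one possible convention, the sketch below scores each source linearly from 1.0 (dated today) down to 0.0 (at or beyond a maximum age) and averages the results. The function name, the linear decay, and the 365-day default are all assumptions.

```python
from datetime import date


def freshness_score(source_dates: list[date], today: date,
                    max_age_days: int = 365) -> float:
    """Average per-source freshness: 1.0 is dated today, 0.0 is max_age_days or older."""
    if not source_dates:
        return 0.0
    scores = []
    for source_date in source_dates:
        age = max((today - source_date).days, 0)  # treat future dates as age 0
        scores.append(max(0.0, 1.0 - age / max_age_days))
    return sum(scores) / len(scores)


# Placeholder dates: one source from today, one ten days old, 100-day window.
score = freshness_score(
    [date(2024, 6, 30), date(2024, 6, 20)],
    today=date(2024, 6, 30),
    max_age_days=100,
)
print(round(score, 2))
```

Passing `today` explicitly instead of reading the system clock keeps the score reproducible in tests and in historical reports.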

Cost considerations matter too. Evaluate the ROI of automating data sourcing and publishing against the incremental lift in traffic, engagement, and downstream conversions. A disciplined approach helps you scale confidently without sacrificing quality.
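A back-of-the-envelope version of that comparison is the usual return-on-investment ratio: gain minus cost, divided by cost. The function below is a minimal sketch; the parameter names are assumptions and the figures in the usage example are made up to show the arithmetic, not benchmarks.

```python
def automation_roi(monthly_cost: float, incremental_conversions: float,
                   value_per_conversion: float) -> float:
    """Return ROI as a fraction: (gain - cost) / cost."""
    if monthly_cost <= 0:
        raise ValueError("monthly_cost must be positive")
    gain = incremental_conversions * value_per_conversion
    return (gain - monthly_cost) / monthly_cost


# Made-up figures: 2,000 per month in tooling, 60 extra conversions worth 50 each.
roi = automation_roi(monthly_cost=2000, incremental_conversions=60,
                     value_per_conversion=50)
print(f"{roi:.0%}")
```

The hard part in practice is attributing the incremental conversions to the workflow, so treat the input as an estimate and revisit it as the dashboard accumulates data.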

Getting Started: A 30-Day Plan

Days 1-5: Define the content scope and identify core topics that benefit most from real-time data. Establish data sources, quotes, and an initial backlink map. Create a data-driven brief template and governance rules.

Days 6-12: Build the quotes library and source verification processes. Start collecting live data and map early cross-blog references. Set up schema scaffolding and plan initial CMS API integrations.

Days 13-20: Launch the first pilot post with real-time data, quotes, and backlinks. Implement update triggers and QA checks. Begin tracking the defined metrics and adjust prompts for AI-friendly outputs.

Days 21-30: Expand to a small content set, refine governance, and establish a repeatable weekly cadence for data refreshes and updates. Prepare a case study or two to illustrate early impact and refine your long-term plan.

Tips for success: keep your prompts concise, maintain attribution discipline, and ensure your teams synchronize on tone and voice across platforms. Real-time research is iterative; expect occasional reworks as data evolves.

As you implement real-time research workflows, consider how this approach fits your broader content strategy. The combination of credible quotes, timely data, and cross-blog references creates a richer reader experience and stronger AI signals. With governance baked in and a repeatable process, your content can scale without losing the human touch that makes content trustworthy and useful.